Authors: Dr Oskar J. Gstrein and Dr Andrej Zwitter, RUG

University of Groningen, Campus Fryslân, Governance and Innovation

One of the four Focus Areas of CCI is Predictive Policing (PP). This can be defined as ‘the collection and analysis of data about previous crimes for identification and statistical prediction of individuals or geospatial areas with an increased probability of criminal activity to help develop policing intervention and prevention strategies and tactics’ (Meijer & Wessels, 2019, p. 1033). Although this algorithm-based application of automated decision-making is relatively new, there is already considerable debate about its usefulness, fairness, legitimacy and effectiveness (Richardson et al., 2019).

In the CCI project, Law Enforcement Agencies (LEAs) from the Netherlands and Lower Saxony in Germany are leading on predictive policing. The objective of the project is to design and develop innovative ‘toolkits’ for LEAs, which are based on a ‘state-of-the-art review’, requirements capture research with end users, as well as a study on ethical, legal and social challenges of predictive policing (Gstrein et al., 2019). This puts the CCI approach in contrast to the practice of many LEAs in the United States, the United Kingdom and Europe, which buy and operate ‘ready-made’ solutions designed by non-LEA parties, such as ‘PredPol’ or ‘PreCobs’ (Hardyns & Rummens, 2018, pp. 208–209).

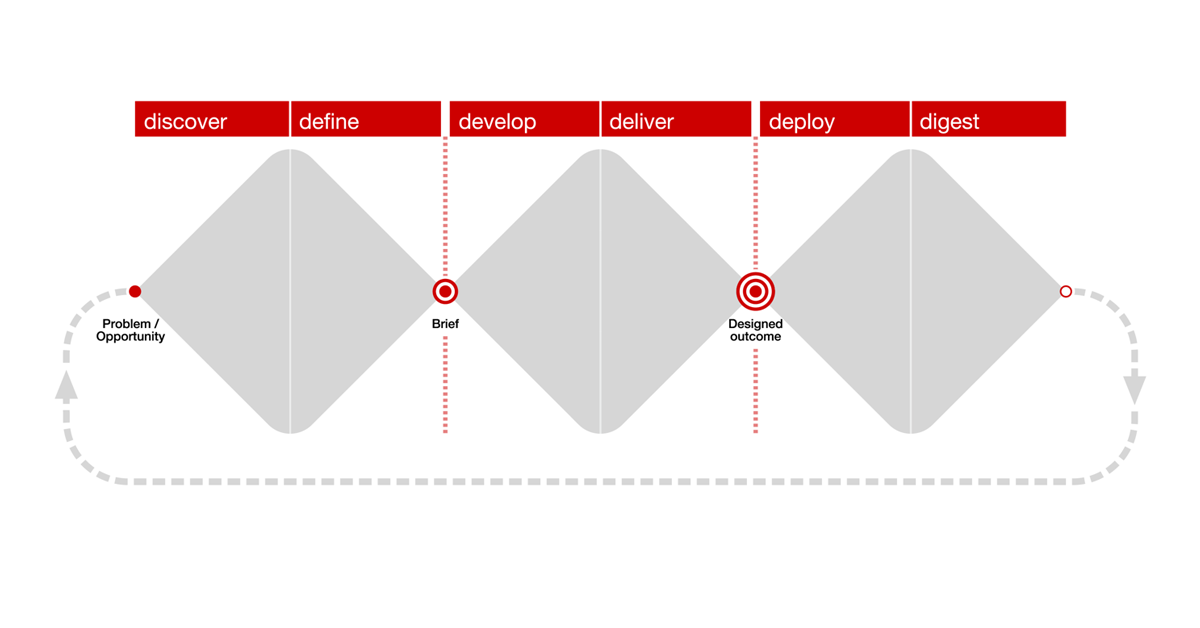

The ‘human-centred’ CCI design process consists of four diverging and converging phases, through which the landscape is studied and reviewed, and in which design requirements are captured through interdisciplinary and transnational research. These phases (discover, define, develop and deliver) are the first four visualised in the ‘Triple Diamond model’ (Davey & Wootton, 2011, 2017).

Fig. 1 – The Triple Diamond model, as developed by Davey & Wootton (2011).

Most predictive policing systems currently in use are location-focused, applying the so-called ‘near-repeat approach’. This approach is based on criminological insights suggesting that crime repeats close to where it occurred in the recent past (Gstrein et al., 2019, p. 86). By combining historical crime data with additional sources, such as weather conditions, street grid patterns, or average income in a neighbourhood, these systems calculate maps with ‘hot spots’. These hot spots indicate locations where crimes such as burglary or vehicle theft might take place. However, predictive policing systems can also focus on individuals (Sommerer, 2020, pp. 29–115). Several interlinked ethical, legal, and social challenges are associated with the design and use of conventional predictive policing systems (Gstrein et al., 2019, pp. 86–93).

Fig. 2 – Summary based on (Gstrein et al., 2019, pp. 86–93).
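To make the location-focused, near-repeat idea described above more tangible, the following is a minimal sketch in Python. It is not the method of any particular product such as ‘PredPol’ or ‘PreCobs’. The grid, the incident data, the function names and the two decay parameters (`distance_scale`, `time_scale`) are all assumptions chosen for illustration. Each grid cell simply receives a higher score the closer it lies, in space and time, to previously recorded incidents.

```python
import math


def hot_spot_scores(incidents, grid_size, distance_scale=1.0, time_scale=14.0):
    """Score every grid cell by its proximity to past incidents.

    incidents: list of (x, y, days_ago) tuples in grid coordinates
               (hypothetical data, for illustration only).
    Both decay scales are illustrative assumptions, not calibrated values.
    """
    scores = [[0.0] * grid_size for _ in range(grid_size)]
    for row in range(grid_size):
        for col in range(grid_size):
            for x, y, days_ago in incidents:
                distance = math.hypot(col - x, row - y)
                # Nearby and recent incidents contribute most to the score.
                spatial = math.exp(-distance / distance_scale)
                temporal = math.exp(-days_ago / time_scale)
                scores[row][col] += spatial * temporal
    return scores


def top_cells(scores, k):
    """Return the k highest-scoring cells as (x, y) tuples."""
    cells = [
        (score, (col, row))
        for row, line in enumerate(scores)
        for col, score in enumerate(line)
    ]
    cells.sort(key=lambda item: item[0], reverse=True)
    return [cell for _, cell in cells[:k]]


# Two recent burglaries close together and one older incident further away.
incidents = [(1, 1, 2), (1, 2, 5), (4, 4, 60)]
scores = hot_spot_scores(incidents, grid_size=5)
hot_spots = top_cells(scores, k=2)
print(hot_spots)
```

In this toy setting, the two cells with recent incidents rank highest, while the cell with the 60-day-old incident scores far lower. The sketch also shows why such maps depend entirely on what has been recorded: cells without recorded incidents nearby can never become hot spots.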

From forecasting, to prevention, to information sharing

One of the biggest drawbacks of predictive policing is that there is no unambiguous empirical evidence that predictions actually lead to reduced crime rates (Gstrein et al., 2019, pp. 89–90). Furthermore, predictions are only possible if enough data is available for a particular area or a particular crime. These are important reasons why predictive policing has recently been evaluated very critically in Switzerland (Kayser-Bril, 2020). These aspects, together with concerns about the amplification of existing bias in historic crime data and fears about ‘self-fulfilling prophecies’ resulting from more agents permanently searching for crimes in the same neighbourhoods, have seriously reduced the attractiveness of predictive policing. Some experts conclude that the promise of predictive policing fails to acknowledge the complexity of crime and the societal aspects with which it is associated (Richardson et al., 2019, pp. 225–227).
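The ‘self-fulfilling prophecy’ concern can be illustrated with a deliberately simplified toy model in Python. All numbers, names and rules below are assumptions made for this sketch and do not describe any deployed system: two areas have exactly the same underlying crime rate, the patrol is always sent to the area with the most recorded crime, and only incidents in the patrolled area are observed and added to the record.

```python
def simulate_patrol_feedback(recorded, true_rate, rounds):
    """Send the patrol to the area with the most recorded crime in each round.

    recorded:  initial recorded incidents per area (hypothetical numbers).
    true_rate: incidents that actually occur per round in every area
               (identical across areas by assumption).
    Only incidents in the patrolled area are observed and recorded.
    """
    recorded = list(recorded)
    for _ in range(rounds):
        # The 'prediction' is simply the area with the largest record so far.
        target = recorded.index(max(recorded))
        recorded[target] += true_rate
    return recorded


# Area 0 starts with one more recorded incident than area 1.
history = simulate_patrol_feedback(recorded=[11, 10], true_rate=5, rounds=10)
print(history)  # [61, 10]
```

Although both areas are equally affected by crime in this model, a difference of a single recorded incident at the start grows into a gap of 61 to 10 after ten rounds, because the record reflects where agents were looking rather than where crime occurred.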

While such considerations are typical for debates led by civil society and academics, our research in the CCI project additionally found challenges relating to the effective implementation of data-focused systems in established policing practice and procedures. In simple terms, many agents have difficulty trusting an algorithm-based prediction over their instinct and experience. Furthermore, seemingly mundane aspects, such as the convenient availability of a tablet or a smartphone with which to put data in context, turn out to be crucial in making predictive policing operational.

The question arises whether the focus of data-driven policing should actually be on the value and accuracy of predictions. These forecasts may help to prevent crime from occurring in the first place, but this could equally be achieved by other means, such as a stronger emphasis on community policing. Whereas threat models may be used to justify preemptive use of police force to lower the likelihood of crime, such efforts do little to address the underlying causes of crime, which need to be taken into account when designing interventions. Ideally, a causal crime model would also support the deployment of more long-term preventative means of crime reduction, dealing not only with proximate causes but also intermediary and root causes.

Certainly, augmenting existing human assumptions and instincts with accurate data that fits the context of a situation might aid in mitigating potential prejudice. However, this can only be achieved if data is created, analysed, shared and interpreted accurately, with a clear focus and purpose. Hence, rather than emphasising the sheer volume of data that can be used to produce predictions by algorithms, it may be more advisable to consider how the right information at the right time can be shared among relevant units of an LEA to make policing effective and legitimate.

Redefining design requirements

The case of predictive policing demonstrates that the adoption of automated decision-making in public administration may result in the development of technical systems built on assumptions that do not hold true in reality. On the one hand, this is demonstrated by the value (or lack thereof) of the predictions themselves. It is practically impossible to understand how accurate they are, and their complex creation through algorithms can only be understood by experts. This combination might result in the creation of a ‘black box society’ (Pasquale, 2015). On the other hand, embedding technology into established practices of LEAs is not easy. Many practical, real-world factors have to be considered and addressed. Agents will only rely on technology if they can really trust it, which may call into question the investment in such systems in the first place.

An answer to these dilemmas may lie in the careful assessment of design requirements. When it comes to the use of data and the establishment of a sound data culture, member states of the European Union may even have an advantage over other regions of the world, since data protection and data management frameworks are well developed (Gstrein et al., 2019, p. 87). Additionally, more and more guidance on the ethical challenges associated with the use of Big Data has become available (Završnik, 2019; Zwitter, 2014). Although the promise of Big Data lies in the hidden patterns of data that might only be recognisable by algorithms due to the sheer volume of information, it is still advisable to think very carefully about the direction in which we look for answers. After all, asking well-framed, clear research questions will result in more useful answers, not just vague assumptions and instructions. In this sense, the principle of purpose limitation, which is one of the cornerstones of traditional data protection law (Ukrow, 2018, pp. 242–243), remains crucial for systems using advanced algorithms.

Conclusion

As promising as automated decision-making based on the development of highly sophisticated algorithms might appear, it is not inevitable that such systems will become omnipresent. They will have to prove their added value over the long term for each purpose and application. In the context of predictive policing, it seems that conventional approaches to its design are oversimplified and based on wrong and non-aspirational assumptions. This has led to communities in cities such as Los Angeles demanding that such systems be abandoned (Ryan-Mosley & Strong, 2020). This instance is particularly noteworthy, since predictive policing was first developed in Los Angeles and has a comparatively long history of use there. Additionally, similar algorithm-based technologies, such as facial recognition, have recently been banned in several US cities (Lecher, 2019), and a new report by the Canadian civil society organisation Citizen Lab calls for moratoriums on the use of technology that relies on algorithmic processing by LEAs of historic mass police data sets (Robertson et al., 2020).

In this article, we have outlined ethical, legal and social challenges associated with the use of conventional predictive policing systems. We see a need for redefining the requirements of such systems, which can be done by adopting interdisciplinary and human-centred design processes. These are capable of taking the diverse views and aspirations of a broad set of stakeholders, historic facts, and common societal objectives into account. Through the course of this exercise, it becomes evident that the focus of such systems should shift from prediction to prevention and information sharing. Ultimately, and if designed carefully, algorithms may be able to support better decision-making based on a granular collection of facts and a comprehensive evaluation and interpretation that takes context into account. However, transparent and explainable artificial intelligence is still far away, and currently available systems have so far failed to prove their reliability. Hence, they are not yet ready to become key actors in the justice and security domain.

Additional material

Video: Predictive Policing – Continue, drop, or redesign?

CCI Factsheet: Ethical, Legal & Social issues impacting Predictive Policing

CCI Factsheet: Predictive Policing

References

Davey, C. L., & Wootton, A. B. (2011). "The Triple Diamond model" in Designing Out Crime – A Designers' Guide, Design Council: London, UK. https://www.designcouncil.org.uk/sites/default/files/asset/document/designersGuide_digital_0_0.pdf

Davey, C. L., & Wootton, A. B. (2017). Design Against Crime. A Human-Centred Approach to Designing for Safety and Security. In R. Cooper (Ed.), Design for Social Responsibility Series. Routledge.

Gstrein, O. J., Bunnik, A., & Zwitter, A. (2019). Ethical, legal and social challenges of Predictive Policing. Católica Law Review, 3(3), 77–98.

Hardyns, W., & Rummens, A. (2018). Predictive Policing as a New Tool for Law Enforcement? Recent Developments and Challenges. European Journal on Criminal Policy and Research, 24(3), 201–218. https://doi.org/10.1007/s10610-017-9361-2

Kayser-Bril, N. (2020, July 22). Swiss police automated crime predictions but has little to show for it. AlgorithmWatch. https://algorithmwatch.org/en/story/swiss-predictive-policing/

Lecher, C. (2019, July 17). Oakland city council votes to ban government use of facial recognition. The Verge. https://www.theverge.com/2019/7/17/20697821/oakland-facial-recogntiion-ban-vote-governement-california

Meijer, A., & Wessels, M. (2019). Predictive Policing: Review of Benefits and Drawbacks. International Journal of Public Administration, 42(12), 1031–1039. https://doi.org/10.1080/01900692.2019.1575664

Pasquale, F. (2015). INTRODUCTION: THE NEED TO KNOW. In The Black Box Society (pp. 1–18). Harvard University Press; JSTOR. https://www.jstor.org/stable/j.ctt13x0hch.3

Richardson, R., Schultz, J., & Crawford, K. (2019). Dirty Data, Bad Predictions: How Civil Rights Violations Impact Police Data, Predictive Policing Systems, and Justice (SSRN Scholarly Paper ID 3333423). Social Science Research Network. https://papers.ssrn.com/abstract=3333423

Robertson, K., Khoo, C., & Song, Y. (2020, September 1). To Surveil and Predict: A Human Rights Analysis of Algorithmic Policing in Canada. The Citizen Lab. https://citizenlab.ca/2020/09/to-surveil-and-predict-a-human-rights-analysis-of-algorithmic-policing-in-canada/

Ryan-Mosley, T., & Strong, J. (2020, June 5). The activist dismantling racist police algorithms. MIT Technology Review. https://www.technologyreview.com/2020/06/05/1002709/the-activist-dismantling-racist-police-algorithms/

Sommerer, L. (2020). Personenbezogenes Predictive Policing. Nomos.

Ukrow, J. (2018). Data Protection without Frontiers? On the Relationship between EU GDPR and Amended CoE Convention 108. European Data Protection Law Review, 4(2), 239–247. https://doi.org/10.21552/edpl/2018/2/14

Završnik, A. (2019). Algorithmic justice: Algorithms and big data in criminal justice settings. European Journal of Criminology, 1477370819876762. https://doi.org/10.1177/1477370819876762

Zwitter, A. (2014). Big Data ethics. Big Data & Society, 1(2), 2053951714559253. https://doi.org/10.1177/2053951714559253